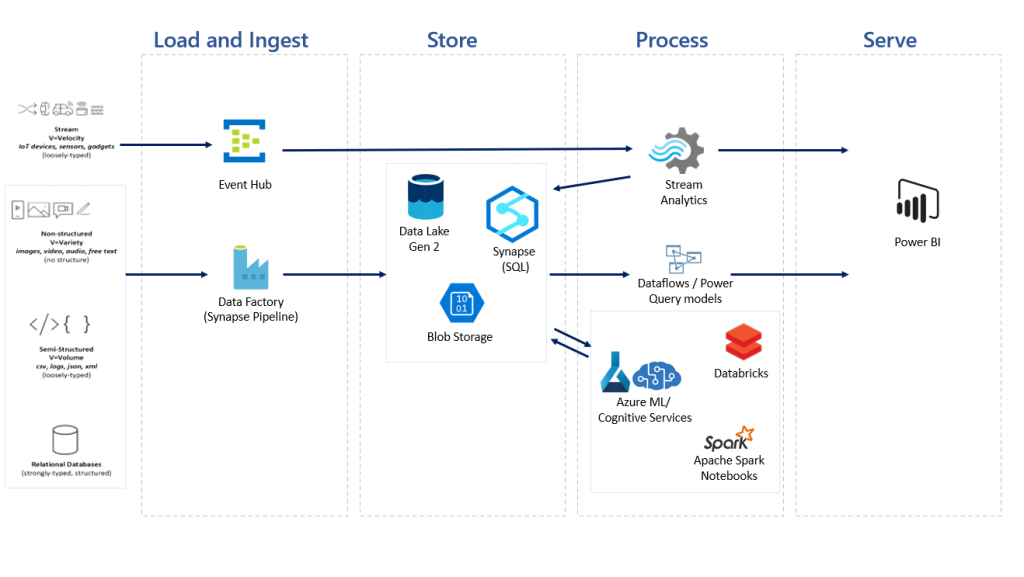

Data Flow stages can be defined at a high level as

Ingest -> Transform -> Store -> Analyze.
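As a rough illustration of these four stages, here is a minimal Python sketch. The function names, the in-memory `table` list, and the sample order records are all hypothetical and stand in for real sources and storage; this is not tied to any Azure API.

```python
import json

def ingest(raw_lines):
    """Ingest: parse raw JSON lines into records."""
    return [json.loads(line) for line in raw_lines]

def transform(records):
    """Transform: derive a 'total' field for each record."""
    return [{**r, "total": r["qty"] * r["price"]} for r in records]

def store(records, table):
    """Store: append records to a table (a plain list stands in for storage)."""
    table.extend(records)
    return table

def analyze(table):
    """Analyze: compute total revenue over everything stored."""
    return sum(r["total"] for r in table)

# Hypothetical input data, for illustration only.
raw = [
    '{"item": "pen", "qty": 3, "price": 2.0}',
    '{"item": "book", "qty": 1, "price": 12.5}',
]

table = []
store(transform(ingest(raw)), table)
revenue = analyze(table)
print(revenue)
```

Each stage only depends on the output of the previous one, which is what lets real pipelines swap in a different storage or analytics service without touching the other stages.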

Data can be processed in batches or as a stream. Batch processing is not real time: for example, one collects sales data for the whole day and runs analytics at the end of the day. Stream processing is near real time: data is processed as it is received.
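The difference can be sketched in a few lines of Python. The `SALES` list and both function names are made up for illustration; a real stream would come from a message queue or event hub rather than a list.

```python
from typing import Iterable, Iterator

# Hypothetical sales amounts for one day, used only for illustration.
SALES = [120.0, 75.5, 310.0, 42.25]

def batch_total(sales: Iterable[float]) -> float:
    """Batch: wait until the whole day's data is collected, then process it once."""
    return sum(sales)

def stream_totals(sales: Iterable[float]) -> Iterator[float]:
    """Stream: update the result as each record arrives."""
    running = 0.0
    for amount in sales:
        running += amount
        yield running

end_of_day = batch_total(SALES)
running_totals = list(stream_totals(SALES))
print(end_of_day, running_totals)
```

Both approaches arrive at the same final total; the streaming version simply makes an up-to-date result available after every record instead of only at the end of the day.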

ELT vs ETL: Extract, Load, and Transform vs Extract, Transform, and Load. As the terms suggest, in ELT data is loaded into storage first and then transformed, whereas in ETL data is transformed first and then loaded. With large amounts of data, ETL can be difficult because the data has to be transformed on the fly before it can be loaded, so ELT might be preferred.
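A small Python sketch of the two orderings, under simplifying assumptions: plain lists stand in for the storage layer, and `transform`, `etl`, `elt`, and the sample records are hypothetical names invented for this example.

```python
Record = dict

def transform(record: Record) -> Record:
    """Hypothetical transformation: upper-case the region, parse the amount."""
    return {"region": record["region"].upper(), "amount": float(record["amount"])}

def etl(source: list, storage: list) -> None:
    """ETL: transform each record first, then load only the transformed result."""
    for record in source:
        storage.append(transform(record))

def elt(source: list, storage: list) -> list:
    """ELT: load the raw records first, then transform them from storage."""
    storage.extend(source)                      # load raw data as-is
    return [transform(r) for r in storage]      # transform afterwards

# Hypothetical raw input, for illustration only.
raw = [{"region": "east", "amount": "10.5"}, {"region": "west", "amount": "4"}]

warehouse = []
etl(raw, warehouse)          # warehouse holds only transformed records

lake = []
curated = elt(raw, lake)     # lake keeps the raw records; curated is derived later
```

In the ELT case the raw data stays available in storage, so the transformation can be rerun or changed later without extracting from the source again.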

Data Management in Cloud: Azure provides multiple solutions for data flow. When choosing a solution, one needs to consider the following aspects: security, storage type (IaaS vs PaaS, Blob, File, Database, etc.), performance, cost, redundancy, availability, etc.

Let’s take a look at some important Azure solutions:

Azure Data Lake Storage: Azure Data Lake is a scalable data storage and analytics service.

Azure Data Factory: Azure Data Factory is Azure’s cloud ETL service for scale-out serverless data integration and data transformation.

Azure Database Services: Azure provides various options for RDBMS and NoSQL database storage.

Azure HDInsight: Azure HDInsight is an open-source analytics service that runs Hadoop, Spark, Kafka, and more.

Azure Databricks: Azure Databricks is a fast, easy and collaborative Apache Spark-based big data analytics service designed for data science and data engineering.

Azure Synapse Analytics: Azure Synapse Analytics is a limitless analytics service that brings together data integration, enterprise data warehousing and big data analytics. It gives you the freedom to query data on your terms, using either serverless or dedicated options—at scale.

Here is how a typical data flow looks in Azure: